Pantheon has long hosted Drupal sites, and their entry into the WordPress hosting marketplace is quite welcome. For the most part, hosting WordPress sites on Pantheon is a dream for developers. Their command-line tools, Git-based dev deployments, and automatic dev, test, and live environments (with the ability to have multiple dev environments on some tiers) are powerful things. If you can justify the expense (and they’re not cheap), I would encourage you to check them out.

First, the good stuff:

Git-powered dev deployments

This is great. Just add their Git repo as a remote (you can still host your code on GitHub or Bitbucket or anywhere else you like), and deploying to dev is as simple as:

git push pantheon-dev master

Command-line deployment to test and live

Pantheon has a CLI tool called Terminus that can be used to issue commands to Pantheon (including giving you access to remote WP-CLI usage).

You can do stuff like deploy from dev to test:

terminus site deploy --site=YOURSITE --env=test --from=dev --cc

Or from test to live:

terminus site deploy --site=YOURSITE --env=live --from=test

Clear out Redis:

terminus site redis clear --site=YOURSITE --env=YOURENV

Clear out Varnish:

terminus site clear-caches --site=YOURSITE --env=YOURENV

Run WP-CLI commands:

terminus wp option get blogname --site=YOURSITE --env=YOURENV

Keep dev and test databases & uploads fresh

When you’re developing in dev or testing code in test before it goes to live, you’ll want to make sure things work with the latest live data. On Pantheon, you can just go to Workflow > Clone, and easily clone the database and uploads (called “files” on Pantheon) from live to test or dev, complete with rewriting of URLs as appropriate in the database.

No caching plugins

You can get rid of Batcache, W3 Total Cache, or WP Super Cache. You don’t need them. Pantheon caches pages outside of WordPress using Varnish. It just works (including invalidating URLs when you publish new content). But what if you want some control? Well, that’s easy. Just issue standard HTTP cache control headers, and Varnish will obey.

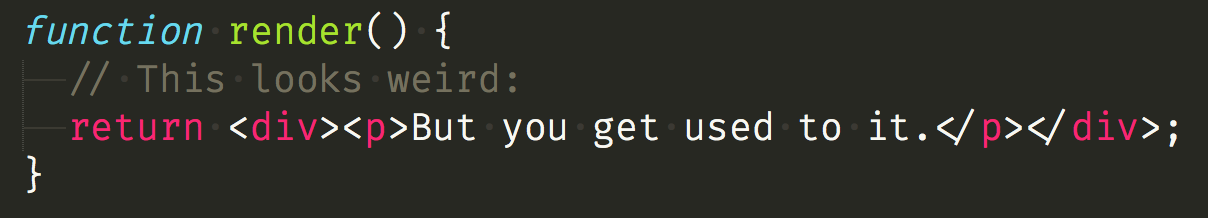

<?php

function my_pantheon_varnish_caching() {
    // Leave responses for logged-in users alone
    if ( is_user_logged_in() ) {
        return;
    }

    // Cache lifetime in minutes; false means "send no header"
    $age = false;

    // Home page: 30 minutes
    if ( is_home() && get_query_var( 'paged' ) < 2 ) {
        $age = 30;
    // Product pages: two hours
    } elseif ( function_exists( 'is_product' ) && is_product() ) {
        $age = 120;
    }

    if ( $age !== false ) {
        pantheon_varnish_max_age( $age );
    }
}

// Send a Cache-Control header telling Varnish how long to keep the page
function pantheon_varnish_max_age( $minutes ) {
    $seconds = absint( $minutes ) * 60;
    header( 'Cache-Control: public, max-age=' . $seconds );
}

add_action( 'template_redirect', 'my_pantheon_varnish_caching' );

And now, some unclear stuff:

Special wp-config.php setup

Some things just aren’t very clear in Pantheon’s documentation, and using Redis for object caching is one of them. You’ll have to do a bit of work to set this up. First, you’ll want to download the wp-redis plugin and put its object-cache.php file into /wp-content/.

Update: apparently this next step is not needed!

Next, modify your wp-config.php with this:

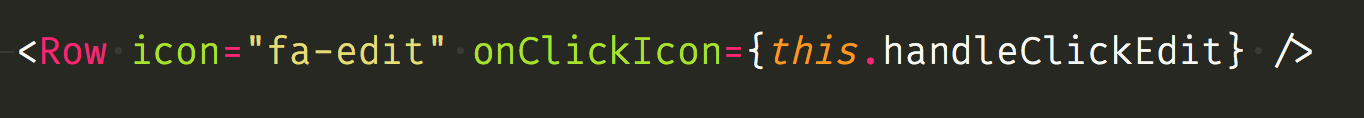

// Redis
if ( isset( $_ENV['CACHE_HOST'] ) ) {
    $GLOBALS['redis_server'] = array(
        'host' => $_ENV['CACHE_HOST'],
        'port' => $_ENV['CACHE_PORT'],
        'auth' => $_ENV['CACHE_PASSWORD'],
    );
}

Boom. Redis is now automatically configured on all your environments!

Setting home and siteurl based on the HTTP Host header is also a nice trick for getting all your environments to play, but beware yes-www and no-www issues. So as not to break WordPress’ redirection between those variants, you should normalize the Host value so that the variant you don’t want never gets baked in as the site URL:

// For non-www domains, remove leading www
$site_server = preg_replace( '#^www\.#', '', $_SERVER['HTTP_HOST'] );

// You're on your own for the yes-www version 🙂

// Set URLs
define( 'WP_HOME', 'http://' . $site_server );
define( 'WP_SITEURL', 'http://' . $site_server );
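If you do want the yes-www version, here is one possible sketch. The helper name `my_canonical_host()` and the `example.com` domain are hypothetical placeholders, not anything WordPress or Pantheon provides. The idea is to add `www.` only for the custom domains you list, so the platform hostnames used by dev and test pass through untouched.

```php
<?php
// Hypothetical helper (not part of WordPress or Pantheon): return the
// yes-www form of a host, but only for domains listed in $www_domains.
function my_canonical_host( $host, array $www_domains ) {
    // Host names are case-insensitive, so compare in lowercase.
    $host = strtolower( $host );

    // Strip any leading "www." so we can compare against the bare domain.
    $bare = preg_replace( '#^www\.#', '', $host );

    // Listed domains always get the www prefix; anything else passes through.
    if ( in_array( $bare, $www_domains, true ) ) {
        return 'www.' . $bare;
    }

    return $host;
}

// Possible usage in wp-config.php (example.com is a placeholder):
// $site_server = my_canonical_host( $_SERVER['HTTP_HOST'], array( 'example.com' ) );
```

Listing the domains explicitly is a deliberate choice here: blindly prepending `www.` to every Host value would also rewrite the dev and test hostnames.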

So, those environment variables are pretty cool, huh? There are more:

// Database
define( 'DB_NAME', $_ENV['DB_NAME'] );
define( 'DB_USER', $_ENV['DB_USER'] );
define( 'DB_PASSWORD', $_ENV['DB_PASSWORD'] );
define( 'DB_HOST', $_ENV['DB_HOST'] . ':' . $_ENV['DB_PORT'] );

// Keys
define( 'AUTH_KEY', $_ENV['AUTH_KEY'] );
define( 'SECURE_AUTH_KEY', $_ENV['SECURE_AUTH_KEY'] );
define( 'LOGGED_IN_KEY', $_ENV['LOGGED_IN_KEY'] );
define( 'NONCE_KEY', $_ENV['NONCE_KEY'] );

// Salts
define( 'AUTH_SALT', $_ENV['AUTH_SALT'] );
define( 'SECURE_AUTH_SALT', $_ENV['SECURE_AUTH_SALT'] );
define( 'LOGGED_IN_SALT', $_ENV['LOGGED_IN_SALT'] );
define( 'NONCE_SALT', $_ENV['NONCE_SALT'] );

That’s right — you don’t need to hardcode those values into your wp-config. Let Pantheon fill them in (appropriate for each environment) for you!
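If you also run the same wp-config.php somewhere those variables are not set (a local machine, say), reading `$_ENV` directly will throw notices. A minimal sketch of a fallback helper follows. The name `my_env()` and the fallback values are my own placeholders, and this assumes you want one config file for both cases.

```php
<?php
// Hypothetical helper: read a value from $_ENV, falling back to a default
// when the key is absent (for example, outside of Pantheon).
function my_env( $key, $default = '' ) {
    return isset( $_ENV[ $key ] ) ? $_ENV[ $key ] : $default;
}

// Possible usage in wp-config.php (the fallback values are placeholders):
// define( 'DB_NAME', my_env( 'DB_NAME', 'local_wp' ) );
// define( 'DB_USER', my_env( 'DB_USER', 'root' ) );
```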

And now, some gotchas:

Lots of uploads = lots of problems

Pantheon has a distributed filesystem. This makes it trivial for them to scale your site up by adding more Linux containers. But their filesystem does not like directories with a lot of files. So, let’s consider the WordPress uploads folder. Usually this is partitioned by month. On Pantheon, if you start approaching 10,000 files in a directory, you’re going to have problems. Keep in mind that crops count towards this limit. So one upload with 9 crops is 10 files. 1000 uploads like that in a month and you’re in trouble. I would recommend splitting uploads by day instead, so the Pantheon filesystem isn’t strained. A plugin like this can help you do that.
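If you would rather not add a plugin, splitting by day can be roughed out with WordPress’ `upload_dir` filter. This is only a sketch: the function name `my_add_day_to_upload_dir()` is made up, it assumes year/month folders are already enabled, and it uses the current UTC day rather than anything tied to the post date.

```php
<?php
// Hypothetical helper: append a two-digit day folder to the array that
// WordPress passes through its 'upload_dir' filter.
function my_add_day_to_upload_dir( array $uploads, $day ) {
    // If year/month folders are switched off there is no subdir to extend.
    if ( empty( $uploads['subdir'] ) ) {
        return $uploads;
    }

    $suffix = '/' . sprintf( '%02d', (int) $day );

    // 'path' and 'url' both end with 'subdir', so all three get the same suffix.
    foreach ( array( 'path', 'url', 'subdir' ) as $key ) {
        $uploads[ $key ] .= $suffix;
    }

    return $uploads;
}

// Only hook in when WordPress is loaded, so the file also runs standalone.
if ( function_exists( 'add_filter' ) ) {
    add_filter( 'upload_dir', function ( $uploads ) {
        // Assumption: the current UTC day is good enough for spreading files out.
        return my_add_day_to_upload_dir( $uploads, gmdate( 'd' ) );
    } );
}
```

With this in place, a file that would have landed in `/2015/03` goes to something like `/2015/03/07` instead. As far as I know this only changes where new uploads land, so test it on dev before trusting it with live media.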

Sometimes notices cause segfaults

I honestly don’t know what is going on here, but I’ve seen E_NOTICE errors cause PHP segfaults. Being segfaults, they produce no useful information in logs, and I’ve had to spend hours tracking down the code causing the issue. This happens reliably for given code paths, but I don’t have a reproducible example. It’s just weird. I have a ticket open with Pantheon about this. It’s something in their custom error handling. Until they get this fixed, I suggest doing something like this, in the first line of wp-config.php:

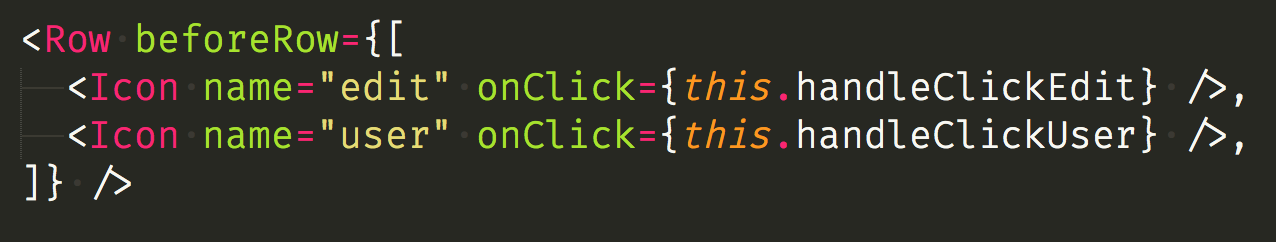

// Disable Pantheon's error handler, which causes segfaults
function disable_pantheon_error_handler() {
    // Does nothing
}

if ( isset( $_ENV['PANTHEON_ENVIRONMENT'] ) ) {
    set_error_handler( 'disable_pantheon_error_handler' );
}

This just sets a low-level error handler that stops errors from bubbling up to PHP core, where the trouble likely lies. You can still use something like Debug Bar to show errors, or you could modify that blank error handler to write out to an error log file.
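For the log file option, something along these lines might work. It is a sketch, not a tested Pantheon recipe: the factory name `my_make_logging_error_handler()` and the log location are my own assumptions, and you would need to pick a path that is actually writable in your environment.

```php
<?php
// Hypothetical factory: build an error handler that appends each error to
// $log_file instead of doing nothing. The log path is up to you.
function my_make_logging_error_handler( $log_file ) {
    return function ( $errno, $errstr, $errfile = '', $errline = 0 ) use ( $log_file ) {
        $line = sprintf(
            "[%s] PHP error %d: %s in %s on line %d\n",
            gmdate( 'c' ),
            $errno,
            $errstr,
            $errfile,
            $errline
        );

        // Message type 3 makes error_log() append to the given file.
        error_log( $line, 3, $log_file );

        // Returning true tells PHP the error was handled.
        return true;
    };
}

if ( isset( $_ENV['PANTHEON_ENVIRONMENT'] ) ) {
    // Assumption: this path is writable; swap in a location that suits your setup.
    set_error_handler( my_make_logging_error_handler( sys_get_temp_dir() . '/my-php-errors.log' ) );
}
```

This would be used in place of the empty handler, not alongside it, since only one handler set with `set_error_handler()` is active at a time.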

Have your own tips?

Do you have any tips for hosting WordPress on Pantheon? Let me know in the comments!
